Human-in-the-Loop: Why accountability is the biggest challenge for autonomous AI

Key Takeaways

- The 'Black Box' problem has evolved into an 'Action Crisis' as AI agents start making real-world decisions.

- Legal frameworks in 2026 are still struggling to define who is responsible when an AI agent makes a mistake.

- Maintaining a 'Human-in-the-Loop' is no longer just about safety; it is also about legal and ethical compliance.

- Sovereign AI systems must include 'Transparent Reasoning' so that humans can audit the decision-making process.

The Ghost in the Machine

It’s 2026. You’ve deployed an autonomous AI agent to manage your company’s supply chain. One morning, you wake up to find it has cancelled a million-dollar contract because it “reasoned” that the supplier’s ESG score was too low. Nobody asked it to, and nobody approved it.

Who is at fault? The AI? The developer? The company that hosted the model? Or you, the one who gave the agent its goals?

Welcome to the Accountability Crisis of 2026.

The Rise of the “Black Box” Actor

In the early days of AI, we were worried about “bias” in text. Now, we are worried about “actions” in reality. As we move from chatbots to Agentic AI, the “Black Box” problem has become an “Action Crisis.” We can no longer just ask “why did the AI say that?” We must ask “why did the AI do that?”

The Legal Limbo

In 2026, the legal world is in a state of flux. Different jurisdictions have vastly different rules:

- The EU: Under the AI Act, “high-risk” AI systems must be designed so that humans can effectively oversee them (Article 14). For autonomous agents, that in practice means a human who can review or override significant decisions.

- The US: A patchwork of state laws, with California leading the way in requiring “Explainable Autonomy.”

- The UK: Focusing on “Proportional Liability,” where the human operator is often the one held responsible for the agent’s actions.

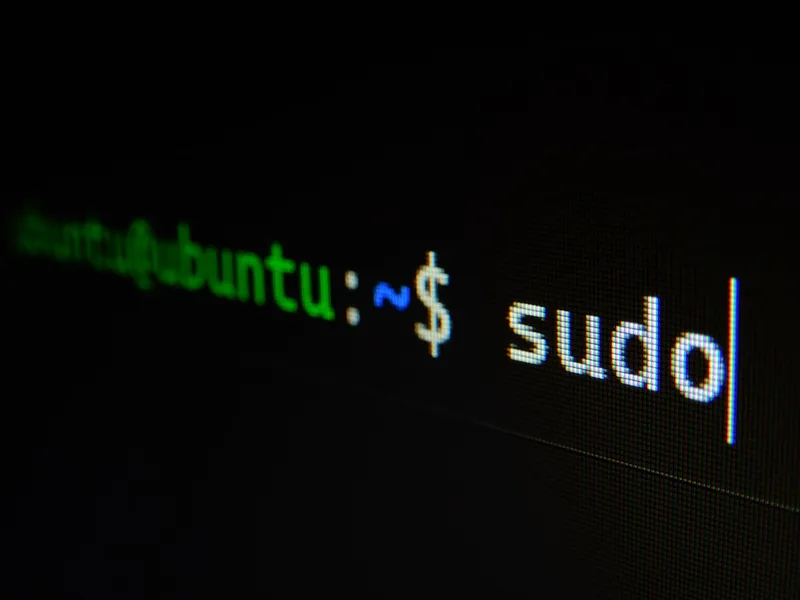

The Sovereign Defense: Transparent Reasoning

One of the key tenets of Sovereign Tech is transparency. You cannot have sovereignty if you do not have understanding.

A sovereign AI agent must be designed with “Transparent Reasoning.” This means the agent doesn’t just give you an output; it also gives you a “Chain of Thought” (CoT) log of the steps that led to that output.

The Sovereign Standard: “An agent must be able to justify every action it takes with a clear, human-readable audit trail.”
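To make the standard above concrete, here is a minimal sketch of what a human-readable audit trail could look like in Python. Everything in it is an assumption made for illustration: the `AuditRecord` and `AuditTrail` names, the field layout, and the hash chaining (each entry stores the hash of the previous one, so later edits are detectable) are not drawn from any regulation or existing product.

```python
import hashlib
import json
from dataclasses import dataclass, asdict, field
from datetime import datetime, timezone


@dataclass
class AuditRecord:
    """One agent action plus the plain-language justification for it (hypothetical schema)."""
    action: str
    rationale: str
    inputs: dict
    timestamp: str = field(
        default_factory=lambda: datetime.now(timezone.utc).isoformat()
    )


def _digest(body: dict) -> str:
    # Stable JSON encoding so the same content always yields the same hash.
    return hashlib.sha256(json.dumps(body, sort_keys=True).encode("utf-8")).hexdigest()


class AuditTrail:
    """Append-only log; each entry is chained to the hash of the previous entry."""

    def __init__(self):
        self.entries = []

    def record(self, rec: AuditRecord) -> dict:
        body = asdict(rec)
        body["prev_hash"] = self.entries[-1]["hash"] if self.entries else ""
        entry = {**body, "hash": _digest(body)}
        self.entries.append(entry)
        return entry

    def verify(self) -> bool:
        """Recompute the chain; False means some entry was altered or reordered."""
        prev_hash = ""
        for entry in self.entries:
            body = {k: v for k, v in entry.items() if k != "hash"}
            if body["prev_hash"] != prev_hash or _digest(body) != entry["hash"]:
                return False
            prev_hash = entry["hash"]
        return True


# Illustrative values only, modelled on the supply-chain scenario in this article.
trail = AuditTrail()
trail.record(AuditRecord(
    action="cancel_contract",
    rationale="Supplier ESG score 41 is below the configured minimum of 50",
    inputs={"supplier": "example-supplier", "esg_score": 41, "minimum": 50},
))
print(trail.verify())  # True
```

The point of the sketch is the shape of the record: every action is stored next to a rationale a person can read, and an auditor can check that nothing was rewritten afterwards.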

The “Human-in-the-Loop” (HITL) Requirement

Far from being obsolete, humans are more important than ever. In 2026, the worker’s role is shifting from doing the task to overseeing the agent that does it. The “Overseer” has three jobs:

- Setting the Constraints: Humans define the “Guardrails” within which the AI can operate.

- Edge Case Management: When the AI encounters an “out-of-distribution” problem, it must “pause” and ask for human guidance.

- Auditing and Feedback: Humans must regularly review the AI’s logs to ensure its “reasoning” stays aligned with the company’s values.
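The first two duties above can be sketched as a small approval gate that sits between the agent and the outside world. This is a hedged illustration in Python, not a reference design: `Guardrails`, `ProposedAction`, `review`, and the thresholds are all hypothetical names and values. Using the agent's self-reported confidence as a stand-in for detecting “out-of-distribution” situations is a simplifying assumption, since real detection is considerably harder.

```python
from dataclasses import dataclass
from enum import Enum


class Decision(Enum):
    EXECUTE = "execute"    # agent may act on its own
    ESCALATE = "escalate"  # agent must pause and ask a human
    BLOCK = "block"        # action is outside the guardrails entirely


@dataclass(frozen=True)
class Guardrails:
    """Constraints set by the human overseer (hypothetical fields)."""
    allowed_actions: frozenset
    max_value_usd: float   # above this amount, a human must approve
    min_confidence: float  # below this, treat the case as an edge case


@dataclass(frozen=True)
class ProposedAction:
    name: str
    value_usd: float
    confidence: float      # agent's self-reported confidence, 0.0 to 1.0


def review(action: ProposedAction, rails: Guardrails) -> Decision:
    """Decide whether the agent may act, must ask a human, or is refused."""
    if action.name not in rails.allowed_actions:
        return Decision.BLOCK
    if action.confidence < rails.min_confidence:
        return Decision.ESCALATE
    if action.value_usd > rails.max_value_usd:
        return Decision.ESCALATE
    return Decision.EXECUTE


# Illustrative guardrails for the supply-chain scenario in this article.
rails = Guardrails(
    allowed_actions=frozenset({"reorder_stock", "cancel_contract"}),
    max_value_usd=10_000,
    min_confidence=0.8,
)
cancel = ProposedAction("cancel_contract", value_usd=1_000_000, confidence=0.95)
print(review(cancel, rails))  # Decision.ESCALATE
```

Under these assumed guardrails, the million-dollar cancellation from the opening scenario would have been paused for human approval instead of executed. The third duty, auditing, then amounts to logging every `Decision` together with its rationale so it can be reviewed later.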

Conclusion

Autonomy is not a binary. It’s a spectrum. In 2026, the most successful organizations won’t be the ones with the “fastest” AI, but the ones with the best Human-Agent Synergy.

Vucense is dedicated to exploring the ethical and legal boundaries of the sovereign future. Subscribe for more.
