Introduction: Shadow AI Agents in 2026

Direct Answer: What are Shadow AI Agents and why are they dangerous in 2026?

Shadow AI Agents are autonomous scripts or “bots” deployed by employees using low-code builders without IT approval to automate workflows. They are dangerous because they often connect to non-sovereign cloud LLMs, leading to Context Leakage where proprietary enterprise data (financials, IP, strategy) is uploaded to public servers and potentially used for third-party model training. In 2026, unauthorized agents are responsible for 42% of enterprise data exfiltration events, making “Agent Observability” and the provision of Sanctioned Sovereign Agents (running on local hardware or private TEEs) the top priority for CIOs.

The Vucense 2026 Agent Security Index

Benchmarking the risk profiles of agentic deployments in the modern workspace.

| Deployment Type | Data Locality | Observability | Risk Level | Sovereign Score |
|---|---|---|---|---|
| Public Cloud Agent | US/Global | Low (Vendor Controlled) | 🔴 CRITICAL | 1.0/10 |
| SaaS-Integrated AI | Global | Medium | 🟡 HIGH | 4.5/10 |
| Private Cloud Agent | Regional | High | 🟢 LOW | 8.5/10 |
| Sovereign Local Agent | On-Premise | Total Control | Zero Leakage | 10/10 |

The New Frontier of Corporate Risk

In the 2010s, IT departments grappled with “Shadow IT”—unauthorized apps and cloud services used by employees to get their jobs done. In 2026, the problem has evolved into something far more autonomous and dangerous: Shadow AI Agents.

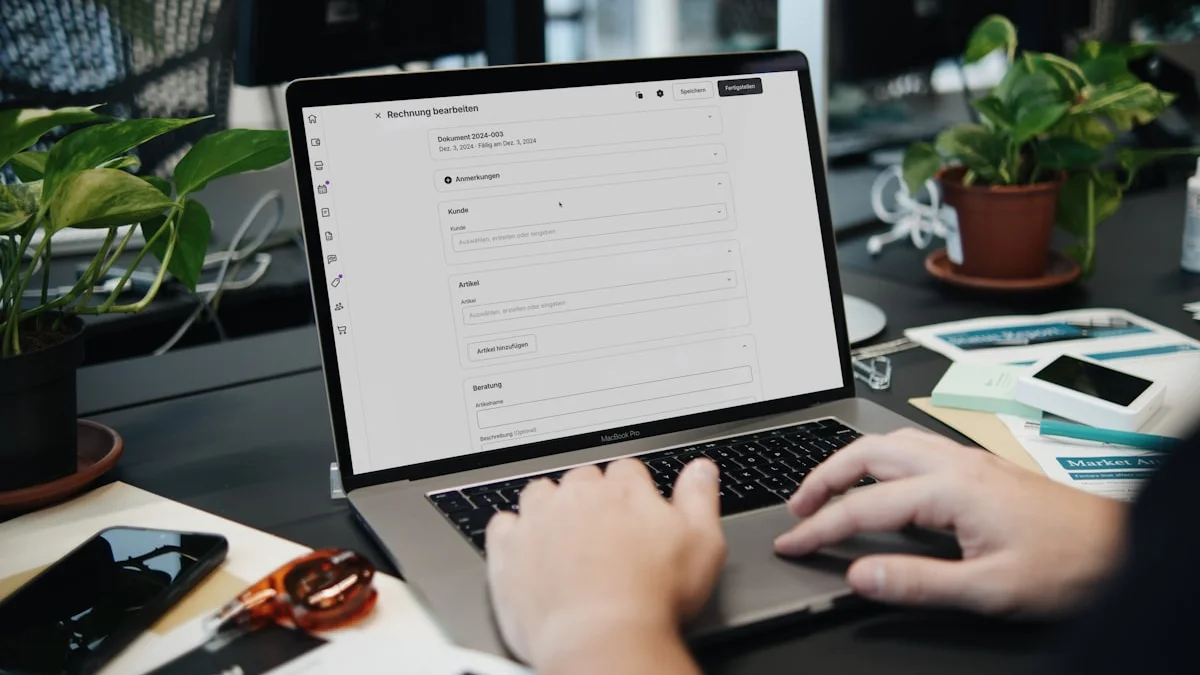

Unlike a simple SaaS app, a Shadow AI Agent is a “bot” created by an employee—often using a low-code agent builder—to automate parts of their workflow. These agents have their own API keys, their own access to corporate files, and, most crucially, they often connect to non-sovereign cloud LLMs.

The Danger: Context Leakage

When an employee deploys a “helpful” agent to summarize internal meetings or analyze financial spreadsheets, they are often unknowingly uploading the company’s “Crown Jewels” to a public cloud.

The Scenario: An analyst creates a personal agent to “optimize” their quarterly reports. The agent, running on a public API, sends confidential projections to a server in the US or China. Depending on the provider’s retention and training policies, that data may now be logged, stored, or used to train future models, where it is potentially exposed to competitors or hackers.

Why Prohibition Fails

History has shown that simply banning tools doesn’t work; employees will always seek out the most efficient way to work. In 2026, the productivity boost from agentic workflows is so high that banning them is akin to banning the internet in 1995.

If you don’t provide your team with a secure, sovereign alternative, they will find an insecure, public one.

The Sovereign Solution: Sanctioned Agents

The only way to mitigate the risk of Shadow AI is to provide Sanctioned Sovereign Agents. These are AI agents that:

- Run Locally: Inference happens on the company’s own hardware or in a private, sovereign cloud.

- Stay Encrypted: Data at rest and in motion is protected by keys held only by the organization.

- Are Observable: IT can see which agents are running, what data they are accessing, and what “tools” they have in their belt.
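The three criteria above can be written down as a simple policy check. What follows is a minimal sketch, not a reference implementation: the `AgentRecord` registry entry, the `policy_violations` function, and the hosts in `SANCTIONED_INFERENCE_HOSTS` are all hypothetical names chosen for illustration.

```python
from dataclasses import dataclass
from typing import List, Optional
from urllib.parse import urlparse

# Hypothetical allowlist of inference hosts the organization controls.
SANCTIONED_INFERENCE_HOSTS = {"llm.internal.example", "localhost"}


@dataclass
class AgentRecord:
    """One entry in a hypothetical IT-managed agent registry."""
    name: str
    owner: str
    inference_url: str                  # where the agent sends its prompts
    encrypts_with_org_keys: bool        # data protected by organization-held keys
    tools: Optional[List[str]] = None   # None means the tool list was never declared


def policy_violations(agent: AgentRecord) -> List[str]:
    """Return the reasons an agent fails the sanctioned-agent criteria."""
    violations = []
    host = urlparse(agent.inference_url).hostname
    if host not in SANCTIONED_INFERENCE_HOSTS:
        violations.append(f"inference host {host!r} is not sanctioned")
    if not agent.encrypts_with_org_keys:
        violations.append("data is not protected by organization-held keys")
    if agent.tools is None:
        violations.append("tool list is undeclared, so the agent is not observable")
    return violations


shadow = AgentRecord(
    name="report-bot",
    owner="analyst",
    inference_url="https://api.openai.com/v1/chat/completions",
    encrypts_with_org_keys=False,
)
for reason in policy_violations(shadow):
    print(f"{shadow.name}: {reason}")
```

An empty result means the agent meets all three criteria. In practice such a check would probably run when an agent is registered, so that unsanctioned configurations are caught before they touch corporate data.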

Implementing Agent Observability

To combat Shadow AI, 2026 security teams are deploying Agent Observability Platforms. These tools monitor network traffic for “Agent Signatures”—patterns of API calls and data transfers that indicate an autonomous process is at work.

```python
# Vucense Agent Signature Detector v2.6
# Identifies unauthorized agentic API patterns


def monitor_agent_signatures(api_stream):
    """
    Scans for high-frequency, structured API calls that
    indicate an autonomous agent vs. a human user.

    Each log is expected to be a dict with the keys used below:
    'protocol', 'approved', 'source_ip', 'request_count',
    'interval' and 'token_count'.
    """
    for log in api_stream:
        # 1. Detect Model Context Protocol (MCP) usage from unapproved sources
        if log['protocol'] == 'MCP' and not log['approved']:
            print(f"ALERT: Unauthorized MCP traffic from {log['source_ip']}")

        # 2. Monitor for "Reasoning Loops" (multiple fast, sequential calls)
        if log['request_count'] > 50 and log['interval'] < 0.1:
            print(f"ALERT: Agent-like reasoning loop detected from {log['source_ip']}")

        # 3. Check for Data Exfiltration (large context windows being sent)
        if log['token_count'] > 128000:
            print(f"ALERT: Large context exfiltration detected from {log['source_ip']}")

# Usage: Run on a local gateway to monitor outbound traffic.
```

People Also Ask: Shadow AI Agent FAQ

What is the difference between Shadow IT and Shadow AI?

While Shadow IT involves unauthorized software, Shadow AI involves autonomous agents that can act on behalf of a user, potentially executing commands, accessing files, and exfiltrating data without further human intervention.

How do I identify a “Shadow Agent” in my network?

Look for “Agent Signatures” in your network logs: high-frequency API calls (JSON-RPC 2.0), the use of protocols like MCP (Model Context Protocol), or large outbound data transfers to known public LLM endpoints (OpenAI, Anthropic, Google).
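As a rough illustration of the last signal, the sketch below flags outbound requests to public LLM API hosts. It assumes proxy logs that record the full request URL and the bytes sent; the record fields (`source_ip`, `url`, `bytes_out`), the function name, and the 1 MB threshold are hypothetical, and the host list is illustrative rather than exhaustive.

```python
from urllib.parse import urlparse

# Illustrative, non-exhaustive list of public LLM API hosts; maintain your own.
PUBLIC_LLM_HOSTS = {
    "api.openai.com",
    "api.anthropic.com",
    "generativelanguage.googleapis.com",
}


def flag_public_llm_traffic(records, max_bytes=1_000_000):
    """Yield (source_ip, host, reason) for outbound requests worth reviewing."""
    for rec in records:
        host = urlparse(rec["url"]).hostname or ""
        if host not in PUBLIC_LLM_HOSTS:
            continue  # not a known public LLM endpoint
        if rec["bytes_out"] > max_bytes:
            reason = "large upload to public LLM"
        else:
            reason = "public LLM endpoint"
        yield rec["source_ip"], host, reason


sample = [
    {"source_ip": "10.0.0.5", "url": "https://api.openai.com/v1/chat/completions", "bytes_out": 2_000_000},
    {"source_ip": "10.0.0.6", "url": "https://intranet.example/wiki", "bytes_out": 5_000_000},
]
for source_ip, host, reason in flag_public_llm_traffic(sample):
    print(f"{source_ip} -> {host}: {reason}")
```

Matching on hostname alone will also catch sanctioned use of these providers, so the output is better treated as a review queue than as proof of a Shadow Agent.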

Is banning AI agents effective?

No. In 2026, the productivity gain from AI agents is too high to ignore. Employees will bypass bans. The only effective strategy is providing Sanctioned Sovereign Agents that offer the same power within a secure, local-first perimeter.

Conclusion: Trust, but Verify

The “Silicon Workforce” is here to stay. But to protect your organization’s sovereignty, you must ensure that every digital worker—human or agent—is operating within a secure, controlled, and private environment.

The goal for 2026 is clear: No data leaves the perimeter.
