Key Takeaways

- Breaking today. Mistral AI announced $830 million in debt financing on March 30, 2026 — the largest debt raise by a European AI company and Mistral’s first ever debt financing since its founding in April 2023.

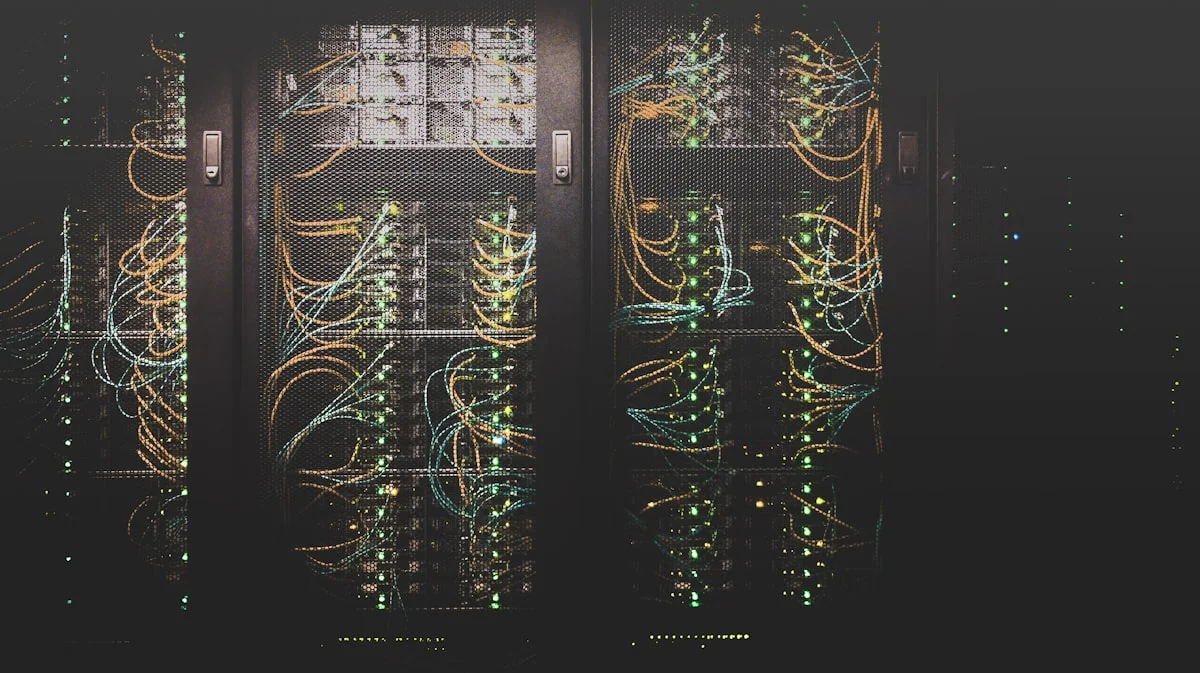

- 13,800 Nvidia GB300 GPUs. The Paris-area data centre at Bruyères-le-Châtel will be powered by Nvidia’s Grace Blackwell infrastructure, delivering 44MW of compute capacity when it opens in Q2 2026.

- Seven-bank consortium. BNP Paribas, Crédit Agricole CIB, HSBC, MUFG, Natixis CIB, La Banque Postale, and Bpifrance are providing the debt. Traditional banks, most of them European, financing AI infrastructure signals a structural shift in how the market views European AI companies.

- 200MW target by 2027. This facility is the first step. Mistral has separately announced a 1.4 gigawatt campus near Paris with MGX (Abu Dhabi’s $100B AI fund), plus a 1.4 billion euro Sweden expansion.

What Happened Today

Mistral AI announced on Monday, March 30, 2026 that it had completed an $830 million debt raise — its first ever — to finance a data centre near Paris. The facility, located in Bruyères-le-Châtel south of Paris at a site owned by French data centre firm Eclairion, will house 13,800 Nvidia GB300 GPUs and deliver 44 megawatts of compute capacity.

The company plans to have the facility operational by the end of Q2 2026.

This is a significant strategic shift for Mistral. Since its founding in 2023, the company has relied on cloud providers — Microsoft Azure, Google Cloud, and CoreWeave — for GPU access and model serving. The Paris data centre marks Mistral’s move toward owning its compute stack rather than renting it.

CEO Arthur Mensch stated in the announcement: “Scaling our infrastructure in Europe is critical to empower our customers and to ensure AI innovation and autonomy remain at the heart of Europe.”

Direct Answer: What did Mistral AI announce on March 30, 2026? Mistral AI announced it has raised $830 million in debt financing — its first ever debt raise — from a consortium of seven banks including BNP Paribas, HSBC, and MUFG, to build a data centre near Paris at Bruyères-le-Châtel. The facility will be equipped with 13,800 Nvidia GB300 GPUs and deliver 44 megawatts of compute capacity, with operations expected to begin in Q2 2026. The financing is part of Mistral’s broader plan to own 200 megawatts of compute capacity across Europe by the end of 2027.

Why Seven Banks Matters More Than the Number

The $830 million figure is significant. But the structure of the deal, with seven traditional banks (six of them European, plus Japan’s MUFG) providing debt financing, is the more important signal.

Banks are conservative creditors. Unlike venture capital, which accepts high risk for high return, debt financing requires creditors to believe they will be repaid from operating cash flows. The participation of BNP Paribas, Crédit Agricole CIB, HSBC, MUFG, Natixis CIB, La Banque Postale, and Bpifrance in a single $830M facility signals that European institutional finance now considers Mistral’s revenue trajectory bankable.

This is a first. No European AI startup has previously accessed this level of institutional debt financing. The closest comparison is the venture rounds raised by US AI labs — but those are equity, not debt, meaning the investors are betting on an exit, not on operating cash flows.

Mistral’s ARR reaching $400 million in February 2026 — up from $20 million just twelve months earlier — is what made the banks comfortable. A company growing revenue 20× in a year, with clear enterprise demand and a pathway to $1 billion ARR, can service debt.
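The growth arithmetic behind that paragraph can be checked in a few lines. The sketch below is illustrative only: the function name is hypothetical, and the inputs are simply the ARR figures quoted above, in millions of US dollars.

```python
def growth_multiple(start_arr: float, end_arr: float) -> float:
    """Return end_arr / start_arr, i.e. how many times ARR has multiplied."""
    if start_arr <= 0:
        raise ValueError("start_arr must be positive")
    return end_arr / start_arr


# Figures quoted in the article, in millions of US dollars.
ARR_FEB_2025 = 20.0
ARR_FEB_2026 = 400.0
ARR_TARGET = 1_000.0  # the "$1 billion ARR" pathway

past_year = growth_multiple(ARR_FEB_2025, ARR_FEB_2026)
still_needed = growth_multiple(ARR_FEB_2026, ARR_TARGET)

print(f"Growth over the past twelve months: {past_year:.0f}x")
print(f"Further growth needed to reach $1B ARR: {still_needed:.1f}x")
```

On these numbers, reaching $1 billion ARR requires a further 2.5× from the current level, far less than the 20× already achieved.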

The Infrastructure Context: Three Mistral Bets Running in Parallel

The Bruyères-le-Châtel facility is one of three infrastructure investments Mistral is building simultaneously:

1. Paris (now announced): $830M, 44MW, 13,800 GB300 GPUs. Operational Q2 2026. Near-term compute for inference and model training. Uses the existing Eclairion data centre site.

2. Sweden: €1.4 billion investment, announced February 2026. Longer-term capacity build. Part of Mistral’s multi-country European compute footprint strategy.

3. The 1.4GW Paris campus, with MGX, Bpifrance, and Nvidia. Announced in March 2026. A dramatically larger facility targeting 1.4 gigawatts of capacity. Construction expected to begin H2 2026, operations by 2028. This is the largest AI infrastructure project in European history.

The three bets together represent Mistral moving from a model company to a full-stack AI infrastructure company — models, serving, training, and cloud services all owned rather than rented.
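To put the three capacity figures on one scale, here is a rough back-of-envelope sketch. It uses only numbers quoted in this article; the helper names are hypothetical, and it assumes the 44MW figure covers the whole site (GPUs, CPUs, networking, and cooling), which the announcement does not spell out.

```python
# Figures quoted in the article.
PARIS_SITE_MW = 44.0        # Bruyères-le-Châtel capacity
PARIS_GPU_COUNT = 13_800    # Nvidia GB300 GPUs
TARGET_2027_MW = 200.0      # stated European target for end of 2027
PARIS_CAMPUS_MW = 1_400.0   # the separate 1.4GW campus


def kw_per_gpu(site_mw: float, gpu_count: int) -> float:
    """Average site power per GPU in kW.

    Assumption: site_mw is total site power, not GPU power alone.
    """
    return site_mw * 1_000 / gpu_count


def share_of(part_mw: float, whole_mw: float) -> float:
    """Fraction of whole_mw represented by part_mw."""
    return part_mw / whole_mw


print(f"Power per GPU: {kw_per_gpu(PARIS_SITE_MW, PARIS_GPU_COUNT):.2f} kW")
print(f"Share of 200MW target: {share_of(PARIS_SITE_MW, TARGET_2027_MW):.0%}")
print(f"1.4GW campus vs first site: {PARIS_CAMPUS_MW / PARIS_SITE_MW:.1f}x")
```

Under that assumption the first site works out to roughly 3.2 kW per GPU and about 22% of the 200MW target, while the planned campus is around 32 times larger.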

The Sovereignty Calculation

The move away from hyperscaler dependency is explicitly framed as a sovereignty decision, not just a cost decision.

Mistral’s LinkedIn statement: “Our objective is to secure 200MW of capacity across Europe by the end of 2027 to support the demand from governments and enterprises that seek to build and control their own AI.”

In 2026, “building and controlling your own AI” means three things for European governments and enterprises:

GDPR compliance by architecture. Data processed on European infrastructure under European law, rather than on US hyperscaler infrastructure subject to the US CLOUD Act.

AI Act readiness. The EU AI Act’s high-risk AI provisions require auditability and documentation of AI systems. Running on owned European infrastructure simplifies compliance considerably versus renting capacity from a US provider.

Strategic autonomy. The European Commission’s Digital Decade programme explicitly targets reducing dependency on non-EU technology infrastructure. Governments procuring AI services from a French company running on French infrastructure is a materially different sovereignty posture than procuring from US hyperscalers.

The demand signal is real. Mistral’s ARR growth from $20M to $400M in twelve months is not coming from individual developers — it is coming from enterprises and governments that are specifically seeking European AI providers for regulatory and strategic reasons.

Mistral vs the US AI Labs: The Funding Reality

Mistral is Europe’s best-funded AI company. But the comparison with US counterparts is stark:

| Company | Total Funding | ARR (2026) |
|---|---|---|
| OpenAI | $180 billion | $25B+ |
| Anthropic | $59 billion | $19B |
| Mistral | $2.9B equity + $0.83B debt | $400M |

Mistral is operating with roughly 2% of OpenAI’s total funding (about 1.6% counting equity alone) while pursuing comparable infrastructure ambitions. The debt route, funding hard assets (GPUs) with debt rather than diluting equity, is a rational response to this capital constraint. GPUs are collateral; they hold value. A bank can lend against them in a way it could not lend against a pure software company.

The question is whether $3.7 billion in total capital (equity plus today’s debt) is sufficient to build the infrastructure required to genuinely compete with US providers for enterprise AI workloads. The answer is probably not at the hyperscaler level — but it may be sufficient to dominate the specifically European market, which values sovereignty over price optimisation and has regulatory structures that create structural advantages for European providers.
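The scale gap can be worked out directly from the table’s figures. This is a minimal sketch, not an analysis: the dictionary and function names are hypothetical, and the inputs are the article’s own funding numbers in billions of US dollars.

```python
# Figures from the comparison table, in billions of US dollars.
FUNDING = {
    "OpenAI": 180.0,
    "Anthropic": 59.0,
    "Mistral": 2.9 + 0.83,  # equity plus the new debt facility
}
MISTRAL_EQUITY = 2.9


def funding_ratio(company: str, benchmark: str) -> float:
    """Return company's total funding as a fraction of benchmark's."""
    return FUNDING[company] / FUNDING[benchmark]


print(f"Mistral total capital: ${FUNDING['Mistral']:.2f}B")
print(f"vs OpenAI (equity + debt): {funding_ratio('Mistral', 'OpenAI'):.1%}")
print(f"vs OpenAI (equity only): {MISTRAL_EQUITY / FUNDING['OpenAI']:.1%}")
print(f"vs Anthropic (equity + debt): {funding_ratio('Mistral', 'Anthropic'):.1%}")
```

Including the debt, Mistral’s capital comes to about $3.73 billion, or close to 2% of OpenAI’s total and a little over 6% of Anthropic’s; on equity alone the OpenAI ratio is about 1.6%.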

What This Means for Mistral Small 4 and Future Models

The timing connects directly to Mistral’s March 16 release of Mistral Small 4. That model requires 4× NVIDIA H100 GPUs minimum for self-hosting — infrastructure that most European enterprises do not have. Mistral’s own cloud serving has been constrained by its reliance on rented capacity.

The Bruyères-le-Châtel facility, once operational in Q2 2026, gives Mistral the owned compute to:

- Serve Mistral Small 4 inference at scale without renting from US hyperscalers

- Train the next generation of models — Mistral Large 4 and beyond — on owned infrastructure

- Offer European enterprises and governments genuinely sovereign AI hosting, where the data never touches non-EU infrastructure

For users who currently access Mistral models via the API at $0.15/1M input tokens, the immediate practical impact is reliability — less capacity constraint, more consistent throughput. The longer-term impact is the ability to offer data residency guarantees that cloud-hosted Mistral currently cannot provide.
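For a sense of what the quoted input price means in practice, here is a small cost estimate. It is a sketch under stated assumptions: the function name and the 250-million-token workload are hypothetical, and only input tokens are counted because this article does not quote an output price.

```python
INPUT_PRICE_PER_MILLION_USD = 0.15  # API input price quoted in the article


def input_cost_usd(input_tokens: int,
                   price_per_million: float = INPUT_PRICE_PER_MILLION_USD) -> float:
    """Estimate input-token spend in US dollars (output tokens excluded)."""
    if input_tokens < 0:
        raise ValueError("input_tokens must be non-negative")
    return input_tokens / 1_000_000 * price_per_million


# Hypothetical workload: 250 million input tokens in a month.
monthly_tokens = 250_000_000
print(f"Estimated input cost: ${input_cost_usd(monthly_tokens):.2f}")
```

At that volume the input side costs $37.50 a month, which is why the near-term benefit of owned capacity is throughput and reliability rather than price.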

The European AI Funding Environment in 2026

Mistral’s debt raise is the largest debt financing by a European AI company, but it is part of an accelerating pattern of large European AI raises:

- Nscale (UK, AI data centres): $2 billion raised in 2026

- Wayve (UK, autonomous driving AI): $1.2 billion raised in 2026

- AMI Labs (France): $1 billion raised in 2026

- Mistral (France): $830 million debt + $2.9B previous equity

The pattern represents European institutional capital — both venture and now debt — treating AI infrastructure as a bankable asset class. This is a structural shift from 2024, when European AI funding was viewed as a rounding error compared to US rounds.

The EU AI Act’s risk categorisation, the GDPR compliance requirements, and European governments’ explicit preference for European AI providers are creating a protected market that makes European AI infrastructure investable in a way it was not previously.

FAQ

When will the Paris data centre be operational? Mistral expects operations to begin by the end of Q2 2026, that is, by the end of June.

What are Nvidia GB300 GPUs? The Nvidia GB300 is the Grace Blackwell Ultra chip — Nvidia’s current flagship data centre GPU. Each unit combines one central processing unit with two Blackwell Ultra graphics processors containing 208 billion transistors on a 4-nanometer process. They are Nvidia’s most capable inference and training accelerators currently available.

Why use debt rather than equity? GPUs are physical assets with resale value — banks can use them as collateral in a way they cannot with software or model weights. Debt financing avoids further equity dilution, preserving ownership stakes for existing investors and employees. It also signals revenue-based creditworthiness, which is a more credible funding signal than venture valuation alone.

Is Mistral profitable? Not disclosed publicly. With $400M ARR and a $2.9B equity raise, Mistral is in a growth phase where capital is being deployed into infrastructure. The debt financing specifically targets hard asset acquisition (GPUs) rather than operating costs, suggesting the company views its operating business as cash-generative enough to service the debt.

How does this compare to US hyperscaler AI infrastructure? Microsoft is investing $80 billion in AI infrastructure in 2026 alone. Google’s 2026 capex guidance is $75 billion, primarily for AI infrastructure. Amazon Web Services is on a similar trajectory. Mistral’s 44MW Paris facility is a small fraction of any single US hyperscaler’s quarterly infrastructure spend — but it is specifically targeting the European sovereign AI market, where US hyperscaler offerings face structural disadvantages.
