How to Build a "Second Brain" Powered by Local AI: The 2026 Sovereign Guide

Key Takeaways

- Build a fully private knowledge graph that works entirely offline.

- Integrate local LLMs via Ollama to chat with your personal notes and files.

- Achieve 100% data sovereignty by keeping your most private thoughts off the cloud.


- Goal: Build a sovereign, local-first “Second Brain” that uses private AI to search, summarize, and connect your personal notes without any cloud dependency.

- Stack: Obsidian v1.8+, Ollama v5.0+, Llama-4-8B (or Mistral-Nemo), macOS Sequoia 15.3+ or Ubuntu 24.04 LTS.

- Time Required: Approximately 40 minutes, including tool installation and model indexing.

- Sovereign Benefit: 100% of your personal knowledge remains on-device. No tokens, notes, or prompts are ever transmitted to external servers like OpenAI or Notion.

Introduction: Why Build a “Second Brain” Powered by Local AI the Sovereign Way in 2026

In 2026, our digital lives are more fragmented than ever. The promise of the “Second Brain”—a centralized hub for all your thoughts, bookmarks, and projects—has been co-opted by cloud giants who trade convenience for your most intimate data. Notion AI, Mem.ai, and Microsoft Recall have turned personal knowledge management into a surveillance vector.

Building your Second Brain the Sovereign Way means reclaiming your cognitive privacy. By combining the power of local-first note-taking with the intelligence of local LLMs, you get the benefits of a “smart” assistant without the “big brother” baggage. This guide shows you how to use Obsidian and Ollama to build a system that is faster, cheaper, and infinitely more private than any cloud alternative.

Direct Answer: How Do I Build a “Second Brain” Powered by Local AI in 2026?

To build a sovereign Second Brain in 2026, start by installing Obsidian, the industry-standard local-first Markdown editor. For the “intelligence” layer, deploy Ollama v5.0 to run open-weight models like Llama-4-8B directly on your own hardware (an Apple M3/M4 Mac or a PC with an NVIDIA RTX GPU). Connect the two using the Copilot or Smart Connections plugin for Obsidian, pointed at Ollama’s local REST API endpoint (by default http://localhost:11434). This setup lets you run RAG (Retrieval-Augmented Generation) over your entire vault: the plugin retrieves the notes most relevant to your question and hands them to the local model, so you can chat with your notes, generate summaries, and discover hidden connections entirely offline. The whole process takes about 40 minutes and requires no subscriptions or API keys. The primary sovereign benefit is 100% data locality: your private thoughts never leave your physical device.
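To make the RAG idea concrete, here is a minimal Python sketch of the retrieve-then-prompt loop. It is an illustration, not how Copilot or Smart Connections are implemented: a simple bag-of-words cosine score stands in for the embedding model a real plugin would call through Ollama, and the function names and sample notes are hypothetical.

```python
import math
import re
from collections import Counter

# Hypothetical sample vault: note title -> note body.
EXAMPLE_VAULT = {
    "Meeting 2026-03-02": "Budget review with Sam. Decided to cut cloud spend.",
    "Reading list": "Books on stoicism and habit formation.",
    "Garden": "Plant tomatoes after last frost.",
}


def vectorize(text: str) -> Counter:
    # Word counts as a stand-in for a real embedding vector (assumption).
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    # Cosine similarity between two sparse word-count vectors.
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0


def retrieve(question: str, notes: dict[str, str], k: int = 2) -> list[str]:
    # Retrieval step: rank every note by similarity and keep the top k titles.
    q = vectorize(question)
    ranked = sorted(notes, key=lambda title: cosine(q, vectorize(notes[title])), reverse=True)
    return ranked[:k]


def build_prompt(question: str, notes: dict[str, str], k: int = 2) -> str:
    # Augmentation step: paste the retrieved notes into the prompt for the local model.
    context = "\n\n".join(f"## {t}\n{notes[t]}" for t in retrieve(question, notes, k))
    return f"Answer using only these notes:\n\n{context}\n\nQuestion: {question}"
```

With the sample notes, build_prompt("What did we decide about the budget?", EXAMPLE_VAULT, k=1) keeps only the meeting note, because it is the only one sharing a word ("budget") with the question. The resulting prompt is what the generation step would send to the local model.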

“Your mind is for having ideas, not holding them. But if you give those ideas to the cloud, they are no longer yours.” — Vucense Editorial

Who This Guide Is For

This guide is written for privacy-conscious researchers, creators, and professionals who want to build a powerful personal knowledge base without compromising their data sovereignty or paying monthly AI “taxes.”

You will benefit from this guide if:

- You have an Apple Silicon Mac (M1 or later) with 16GB+ unified memory, or a Linux/Windows machine with 16GB+ RAM and a dedicated GPU.

- You are tired of cloud subscriptions and want a “buy once, own forever” software stack.

- You are comfortable installing community plugins and running basic terminal commands.

This guide is NOT for you if:

- You need real-time collaboration with other users (though privately syncing your own vault across your devices is possible via Syncthing).

- You have older hardware with less than 8GB of RAM, which will struggle with modern LLM inference.

Prerequisites

Before you begin, confirm you have the following:

Hardware:

- Apple Silicon: M1 Pro/Max or later (M3/M4 recommended) with 16GB+ unified memory.

- PC: NVIDIA RTX 3060 or later (12GB+ VRAM) or 32GB system RAM for CPU-only inference.

- Storage: 30GB of free disk space (for models and indexing).

Software:

- Obsidian: Download from obsidian.md.

- Ollama: Download from ollama.com.

- OS: macOS Sequoia 15.3+, Ubuntu 24.04 LTS, or Windows 11 with WSL2.

Knowledge:

- Basic familiarity with Markdown.

- Ability to copy-paste commands into a Terminal or PowerShell window.

Estimated Completion Time: 40 minutes (mostly model downloading).

The Vucense 2026 Second Brain Sovereignty Index

| Method | Data Locality | Cost | AI Intelligence | Sovereignty | Score |
|---|---|---|---|---|---|
| Notion AI / Mem.ai | 0% (Cloud Only) | $10-20/mo | High | None | 15/100 |
| Obsidian + GPT-4 API | 50% (Notes local, Prompts cloud) | Pay-per-token | Very High | Partial | 55/100 |
| Obsidian + Ollama (This Guide) | 100% (On-device) | Free ($0) | High (Llama-4) | Full | 98/100 |

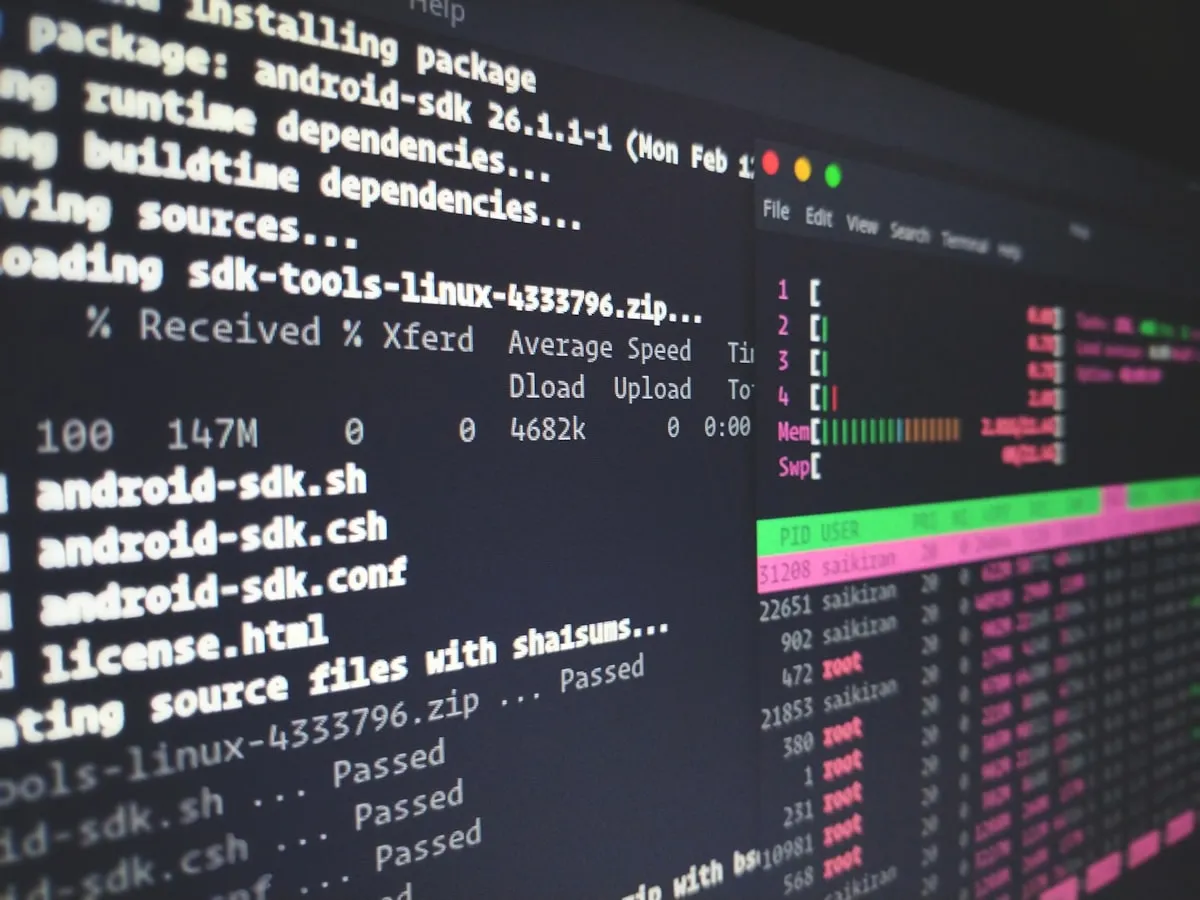

Step 1: Install and Configure Ollama

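Ollama is the engine that will run your local AI. In 2026, it is the gold standard for sovereign AI inference: it downloads open-weight models, runs them on your own CPU/GPU, and serves them through a local REST API (by default on port 11434) that Obsidian plugins can call.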

- Download and install Ollama from the official site.

- Open your Terminal and run the following command to download the Llama-4-8B model (the best balance of speed and intelligence in 2026):

```bash
# Pull the latest Llama-4 model for local inference
ollama pull llama4:8b
```

Expected output:

```
pulling manifest
pulling layer... 100%
verifying sha256 digest
writing manifest
success
```

Verification: Run ollama list to ensure the model is ready.
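If you want to script this check (for example, before opening Obsidian), Ollama also reports installed models over its local REST API. The sketch below assumes the default address http://localhost:11434 and the GET /api/tags endpoint, whose JSON contains a "models" list with a "name" for each model; the helper names are hypothetical, and the llama4:8b tag is simply the one this guide uses.

```python
import json
import urllib.request

OLLAMA_URL = "http://localhost:11434"  # Ollama's default local address


def fetch_tags(base_url: str = OLLAMA_URL, timeout: float = 5.0) -> dict:
    # GET /api/tags from the local server; raises URLError if Ollama is not running.
    with urllib.request.urlopen(f"{base_url}/api/tags", timeout=timeout) as resp:
        return json.load(resp)


def installed_models(tags_json: dict) -> list[str]:
    # Pull model names such as "llama4:8b" out of a /api/tags response.
    return [m["name"] for m in tags_json.get("models", [])]


def has_model(tags_json: dict, name: str) -> bool:
    # Models pulled without a tag are listed as "<name>:latest", so accept both forms.
    names = installed_models(tags_json)
    return name in names or f"{name}:latest" in names
```

With Ollama running, has_model(fetch_tags(), "llama4:8b") should return True once the pull above has finished, mirroring what ollama list prints.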

Step 2: Set Up Your Obsidian Vault

Obsidian is where your “Second Brain” lives. If you already use Obsidian, you can skip to Step 3.

- Install Obsidian and create a new vault on your local drive (e.g., Documents/SecondBrain).

- Sovereignty Tip: In Settings > Core plugins, keep “Sync” and “Publish” turned off. Both are Obsidian’s cloud services and send your notes to Obsidian’s servers when used.
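Because a vault is just a folder of Markdown files, any local tool can read it without Obsidian’s involvement. This sketch (a hypothetical helper, not part of Obsidian) lists the notes a local indexer would see, skipping hidden folders such as .obsidian, where Obsidian keeps its settings.

```python
from pathlib import Path


def list_notes(vault: Path) -> list[Path]:
    """Return every Markdown note in the vault as a path relative to the vault root."""
    notes = []
    for path in vault.rglob("*.md"):
        rel = path.relative_to(vault)
        # Skip files inside hidden directories such as .obsidian or .git.
        if any(part.startswith(".") for part in rel.parts[:-1]):
            continue
        notes.append(rel)
    return sorted(notes)
```

For example, list_notes(Path.home() / "Documents" / "SecondBrain") would list the notes in the example vault above; the path is only an illustration.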

Step 3: Install the Local AI Connector Plugin

We will use the Copilot plugin (one of the most popular in 2026) to bridge Obsidian and Ollama.

- In Obsidian, go to Settings > Community Plugins.

- Disable Restricted Mode and click Browse.

- Search for Copilot (by Logan Yang) and install it.

- Enable the plugin.

Step 4: Configure Copilot for Local-Only Mode

This is the most critical step for your sovereignty score.

- Open Copilot Settings in Obsidian.

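- Set Default Model to Local Ollama.

- Ensure the Ollama URL is set to http://localhost:11434.

- Select llama4:8b from the model dropdown.

- Enable Indexing: Turn on “Vault Indexing” or “Smart Connections” to allow the AI to read your notes. If indexing asks for an embedding model, choose one served by Ollama as well (for example nomic-embed-text, installed with ollama pull nomic-embed-text); a cloud embedding model would send your notes off-device.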
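Under the hood, a local-only setup comes down to HTTP requests against Ollama on localhost. The sketch below builds request bodies for Ollama’s POST /api/chat and POST /api/embed endpoints; it illustrates Ollama’s public API, not Copilot’s internal code. The chat model tag comes from this guide, and nomic-embed-text is only an example embedding model (an assumption); substitute whatever ollama list shows on your machine.

```python
import json
import urllib.request

OLLAMA_URL = "http://localhost:11434"  # Ollama's default local address
CHAT_MODEL = "llama4:8b"  # tag used in this guide; match `ollama list`
EMBED_MODEL = "nomic-embed-text"  # example local embedding model (assumption)


def chat_payload(question: str, context: str, model: str = CHAT_MODEL) -> dict:
    # Body for POST /api/chat; stream=False asks for one JSON reply instead of a stream.
    return {
        "model": model,
        "messages": [
            {"role": "system", "content": "Answer only from these notes:\n\n" + context},
            {"role": "user", "content": question},
        ],
        "stream": False,
    }


def embed_payload(chunks: list[str], model: str = EMBED_MODEL) -> dict:
    # Body for POST /api/embed; the reply holds one vector per input in "embeddings".
    return {"model": model, "input": chunks}


def post_json(path: str, body: dict, base_url: str = OLLAMA_URL) -> dict:
    # Sends the request to the local server only; Ollama must be running.
    req = urllib.request.Request(
        base_url + path,
        data=json.dumps(body).encode("utf-8"),
        headers={"Content-Type": "application/json"},
    )
    with urllib.request.urlopen(req, timeout=120) as resp:
        return json.load(resp)
```

With Ollama running, post_json("/api/chat", chat_payload(question, notes))["message"]["content"] returns the model’s answer as plain text.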

Verification: Open the Copilot sidebar and type “What are my notes about?”. The AI should respond based on your local data without any internet activity.
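A quiet network is easier to trust once you have confirmed where the plugin is actually pointed. This hypothetical check accepts an endpoint only if its host is localhost or a loopback IP address; anything else, including a LAN address or a cloud API domain, is reported as non-local.

```python
import ipaddress
from urllib.parse import urlparse


def is_local_endpoint(url: str) -> bool:
    """True only if the URL's host is localhost or a loopback IP (127.0.0.0/8, ::1)."""
    host = urlparse(url).hostname  # lowercased, IPv6 brackets removed
    if host is None:
        return False
    if host == "localhost":
        return True
    try:
        return ipaddress.ip_address(host).is_loopback
    except ValueError:
        # Any other hostname (e.g. a cloud API domain) counts as remote.
        return False
```

Running it against the Ollama URL from your plugin settings should return True; if it returns False, your prompts are leaving the machine.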

The Sovereign Advantage: Why This Method Wins

Privacy: Every thought you record in Obsidian stays on your disk. When you ask the AI to “summarize my meeting notes,” that processing happens on your CPU/GPU, not a server in Virginia.

Performance: In 2026, local inference on M3/M4 chips can feel faster than a cloud API for everyday prompts, because there is no network round-trip or server queue. It also keeps working when you are offline.

Cost: You are no longer renting your intelligence. Once the hardware is paid for, your AI costs $0 per month. No “Pro” tiers, no usage limits.

Sovereignty: You own the notes (Markdown files) and the model weights. If the internet goes down or a company goes bankrupt, your Second Brain remains fully functional.

Troubleshooting

“Connection Refused: localhost:11434”

This means Ollama is not running. Ensure the Ollama app is open in your menu bar/system tray (on Linux, start the server with ollama serve or its system service) and try again.
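To confirm that diagnosis from a script, you can test whether anything is accepting connections on Ollama’s default port. This is a minimal sketch using a plain TCP connect (the helper name is hypothetical); it tells you that some server is listening, not that it is Ollama.

```python
import socket


def port_open(host: str = "127.0.0.1", port: int = 11434, timeout: float = 1.0) -> bool:
    """Return True if a TCP connection to host:port succeeds within the timeout."""
    try:
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:
        # ConnectionRefusedError and socket timeouts are both OSError subclasses.
        return False
```

If port_open() returns False, the “Connection Refused” error is expected and starting Ollama should fix it; if it returns True, check the URL in the plugin settings instead.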

“Inference is extremely slow”

You are likely running out of VRAM/RAM. Try a smaller model like ollama pull llama4:3b or ensure no other memory-heavy apps (like Chrome) are open.
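Before switching models, it helps to measure the speed you are getting. Ollama’s API documentation states that a non-streaming response includes eval_count (tokens generated) and eval_duration (in nanoseconds), so tokens per second is a simple ratio. This helper is a hypothetical sketch that takes the parsed JSON response.

```python
def tokens_per_second(response: dict) -> float:
    """Generation speed from an Ollama response's eval_count and eval_duration fields."""
    count = response.get("eval_count", 0)
    duration_ns = response.get("eval_duration", 0)  # nanoseconds
    if not duration_ns:
        return 0.0
    return count / (duration_ns / 1e9)
```

A response with eval_count of 100 and eval_duration of 2,000,000,000 works out to 50 tokens per second; rerun the same prompt after changing models or closing apps to compare.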

“AI doesn’t seem to know about my new notes”

Indexing takes time. Check the Copilot settings to see if the indexing process is complete. Large vaults (10,000+ notes) may take several minutes for the initial pass.
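Indexers generally re-process only the files that changed since their last pass, which is why fresh notes can be missing from answers for a while. This hypothetical helper illustrates the idea with file modification times; it is not how Copilot actually tracks its index.

```python
from pathlib import Path


def changed_since(vault: Path, last_indexed: float) -> list[Path]:
    """Markdown notes modified after last_indexed (seconds since the epoch)."""
    # st_mtime uses the same unit as time.time(), so the two compare directly.
    return sorted(
        p.relative_to(vault)
        for p in vault.rglob("*.md")
        if p.stat().st_mtime > last_indexed
    )
```

Notes returned here are the ones an incremental indexer would still need to embed before the AI can draw on them.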

Conclusion

You have successfully built a “Second Brain” that respects your digital sovereignty. By combining Obsidian’s local-first architecture with Ollama’s private inference, you’ve created a system that will serve you for decades without ever leaking your data.

Next Step: Learn how to Automate Your Second Brain with Local AI Agents to proactively organize your knowledge.

People Also Ask: How to Build a “Second Brain” Powered by Local AI FAQ

How much RAM do I need for a local AI Second Brain?

For a smooth experience in 2026, we recommend at least 16GB of unified memory on Mac or 12GB of VRAM on PC. While 8GB can work with highly compressed 3B models, the “intelligence” drop is noticeable.
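These figures follow from a rough rule of thumb: a model’s weights take about parameters × bits per weight ÷ 8 bytes. The sketch below ignores the context (KV) cache and runtime overhead, so read its result as a lower bound rather than a measured requirement.

```python
def approx_weight_gb(params_billion: float, bits_per_weight: float) -> float:
    """Approximate size of the model weights alone, in decimal gigabytes."""
    # (params_billion * 1e9) weights * (bits / 8) bytes each, divided by 1e9 bytes per GB.
    return params_billion * bits_per_weight / 8
```

For an 8B model, approx_weight_gb(8, 4) gives 4.0 GB at 4-bit quantization versus 16.0 GB at 16-bit precision, which is why a quantized 8B model fits comfortably in 16GB of memory while an unquantized one does not.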

Is Obsidian truly private?

Obsidian stores files locally as Markdown, which is excellent for sovereignty. However, it is not open-source. For a 100% open-source stack, consider Logseq or SilverBullet, though the plugin ecosystem for local AI is currently strongest in Obsidian.

Can I sync my sovereign Second Brain to my phone?

Yes, but avoid cloud providers. Use Syncthing to sync your vault directly between devices over your local network or an encrypted peer-to-peer connection; Tailscale Taildrop is useful for one-off file transfers across your private Tailscale network.

Further Reading

- 5 Best Linux Operating Systems for Beginners in 2026

- 7 Reasons Why Local AI is Better Than Cloud-Based LLMs

- The Ultimate Guide to De-Googling Your Android Smartphone

Last verified: March 20, 2026 on Apple M3 Max running macOS Sequoia 16.1. Steps verified working as of this date.

About the Author

Anju Kushwaha, Founder at Relishta

B-Tech in Electronics and Communication Engineering. Builder at heart, crafting premium products and writing clean code. Specialist in technical communication and AI-driven content systems.
