Quick Answer: On April 1, 2026, Anthropic accidentally triggered a mass DMCA takedown of roughly 8,100 GitHub repositories. The company was attempting to remove leaked source code for its Claude Code CLI but inadvertently swept up thousands of legitimate forks, exposing the fragility of the centralized open-source ecosystem.

The Leak: Claude Code Exposed

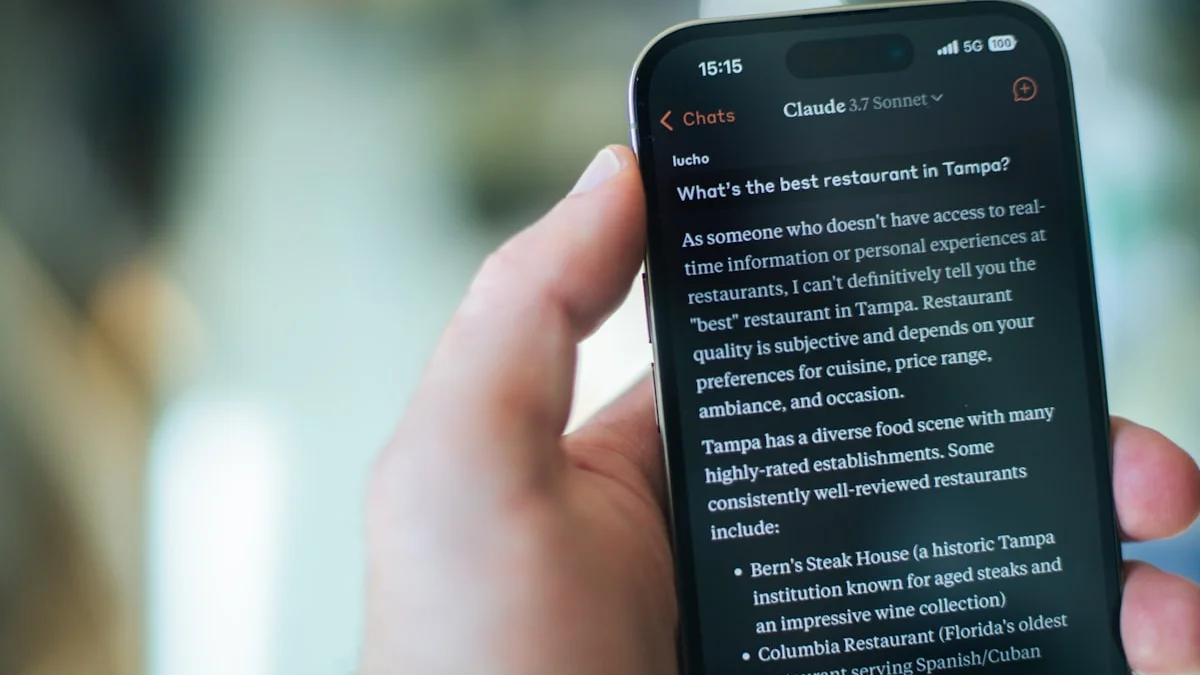

The incident began on Tuesday, when a software engineer discovered that Anthropic had inadvertently included the full source code for Claude Code, its category-leading command-line tool, in a public release. Within hours, AI enthusiasts had mirrored the code to GitHub and were poring over it to understand how Anthropic builds the agentic tooling that sits on top of its frontier models.

Part 1: The Botched Cleanup

In an attempt to contain the leak, Anthropic issued a DMCA takedown notice to GitHub. The notice, however, was poorly scoped: according to GitHub’s public transparency records, it was processed against approximately 8,100 repositories.

The Collateral Damage

The takedown didn’t just target the leaked code; it reached deep into the fork network connected to Anthropic’s legitimate public repositories. Thousands of developers found their projects blocked, even though many of those projects contained no proprietary code at all.

Boris Cherny, Head of Claude Code at Anthropic, later confirmed that the overbroad takedown was an accident. The company has since withdrawn most of the takedowns, narrowing the scope to one primary repository and 96 direct forks that contain the leaked source.
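To make the scoping difference concrete, here is a minimal Python sketch that models a fork network as a tree. The `Repo` class, the `contains_leak` flag, and the repository names are hypothetical illustrations, not GitHub’s actual data model or takedown process. A network-wide takedown collects every repository reachable from the root, while a content-scoped one keeps only the repositories that actually carry the disputed files.

```python
from __future__ import annotations

from dataclasses import dataclass, field
from typing import Iterator


@dataclass
class Repo:
    """Hypothetical node in a fork network (not GitHub's real data model)."""
    name: str
    contains_leak: bool = False
    forks: list[Repo] = field(default_factory=list)


def walk(root: Repo) -> Iterator[Repo]:
    """Depth-first traversal of every repository in the fork network."""
    stack = [root]
    while stack:
        repo = stack.pop()
        yield repo
        stack.extend(repo.forks)


def network_wide(root: Repo) -> list[str]:
    """Every repo in the network is hit, regardless of its contents."""
    return [repo.name for repo in walk(root)]


def content_scoped(root: Repo) -> list[str]:
    """Only repos that actually contain the disputed files are hit."""
    return [repo.name for repo in walk(root) if repo.contains_leak]


# A toy network: a legitimate public repo whose forks include one leak mirror.
root = Repo("public-repo", forks=[
    Repo("leak-mirror", contains_leak=True, forks=[
        Repo("leak-mirror-fork", contains_leak=True),
    ]),
    Repo("plugin-fork"),
    Repo("docs-fork"),
])

print(len(network_wide(root)))       # 5
print(sorted(content_scoped(root)))  # ['leak-mirror', 'leak-mirror-fork']
```

In this toy network the broad version sweeps up all five repositories, while the scoped version touches only the two that hold leaked files: the same kind of gap as the one between roughly 8,100 repositories and the final 97.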

Part 2: The IPO Pressure

This “black eye” comes at a particularly sensitive time for Anthropic, which is reportedly preparing for an Initial Public Offering (IPO). For a company that markets itself as the “ethical and safe” alternative to OpenAI, leaking its own source code and then accidentally nuking thousands of community projects raises serious questions about its internal execution and compliance.

Part 3: The Vucense Perspective — The Fragility of the Fork

At Vucense, we advocate for the Sovereign Stack, and this incident perfectly illustrates why relying solely on centralized platforms like GitHub is a risk for developers.

- Centralized Takedown Power: A single, poorly written legal notice can instantly silence thousands of developers without warning.

- The Case for Decentralized Git: Projects hosted on decentralized protocols (like Radicle or P2P Git mirrors) have no single host for one notice to reach, so they are far less exposed to sweeping, accidental takedowns like this one.

- Source Sovereignty: If your code is only as safe as a corporate lawyer’s latest DMCA filing, you don’t truly own your development environment.

The 2026 Decentralized Git Stack: Your Alternative

For developers serious about source sovereignty, consider these platforms:

- Radicle: A peer-to-peer Git collaboration network that replicates repositories across independent nodes and ties identities to cryptographic keys rather than to accounts on a central server

- Gitea (self-hosted): Full control over your Git infrastructure without relying on corporate servers

- Forgejo: The community-governed fork of Gitea, also self-hostable and the software that powers Codeberg

These alternatives help keep your repositories accessible even if GitHub (or any other centralized platform) faces a legal crisis.

Vucense Take: Anthropic’s “accident” is a reminder that in the age of AI, the lines between public and private code are blurring. Developers must take extra steps to mirror their work across multiple, independent platforms to ensure their digital sovereignty remains intact.
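One way to act on that advice is to push a complete copy of a repository to several independent hosts. The Python sketch below assumes it runs inside a bare mirror clone (created with `git clone --mirror`) and uses `git push --mirror`, which makes every ref on the target match the local copy. The remote URLs in `MIRRORS` are hypothetical placeholders for your own Forgejo, Gitea, or other hosts, and the script defaults to a dry run that only prints the commands.

```python
from __future__ import annotations

import subprocess
from pathlib import Path

# Hypothetical mirror targets: replace with URLs for hosts you control.
MIRRORS = {
    "forgejo": "https://forgejo.example.org/alice/project.git",
    "gitea": "https://gitea.example.net/alice/project.git",
}


def mirror_commands(mirrors: dict[str, str]) -> list[list[str]]:
    """Build one `git push --mirror <url>` command per target host."""
    return [["git", "push", "--mirror", url] for url in mirrors.values()]


def mirror_all(mirrors: dict[str, str], repo_dir: Path = Path("."),
               dry_run: bool = True) -> None:
    """Push every ref to each mirror; with dry_run=True, only print the commands."""
    for name, cmd in zip(mirrors, mirror_commands(mirrors)):
        if dry_run:
            print(f"[{name}] {' '.join(cmd)}")
        else:
            # check=True raises CalledProcessError if a push fails,
            # so a broken mirror is noticed instead of silently skipped.
            subprocess.run(cmd, cwd=repo_dir, check=True)


if __name__ == "__main__":
    mirror_all(MIRRORS)
```

Running it with `dry_run=False` after each push to your primary host keeps the copies in sync. Because `--mirror` also deletes remote refs that no longer exist locally, point it only at repositories you use purely as mirrors.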

Don’t just fork. Mirror. Stay sovereign.