Marcus Thorne

Local-First AI Infrastructure Engineer

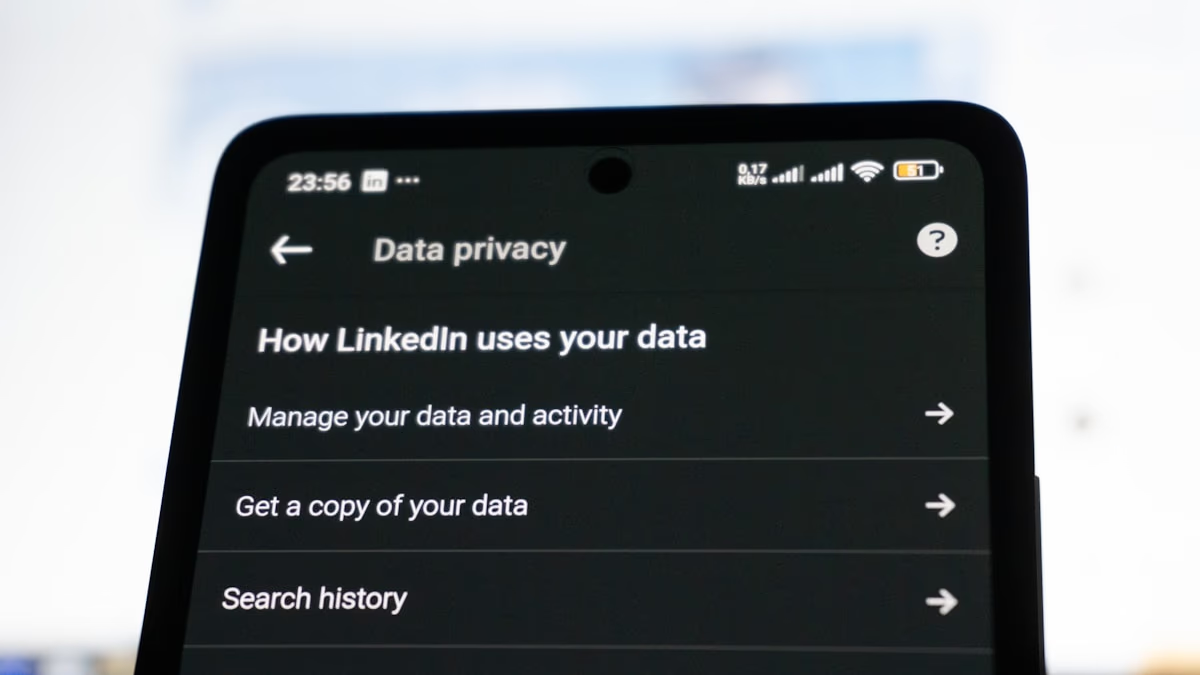

Marcus Thorne is an AI infrastructure engineer focused on optimizing large language models and multimodal AI for on-device deployment without cloud dependencies. With an MSc in machine learning and 7+ years architecting production inference pipelines, Marcus specializes in quantization techniques, ONNX Runtime optimization, and efficient model serving on commodity hardware. His expertise spans Llama, Gemma, and other open models, with deep knowledge of techniques such as 4-bit quantization, low-rank adaptation (LoRA), and FlashAttention. Marcus has optimized inference performance across CPU, GPU, and NPU targets, making privacy-first AI accessible on edge devices. At Vucense, Marcus writes about practical on-device AI deployment, inference optimization, and building private AI applications that never send data to external servers.